Falling in Love with the CLI AI Harness

For all of last year, I wondered why anyone would use the CLI version of Claude Code and similar tools. I asked a colleague about it a couple of times, but I still didn't get it.

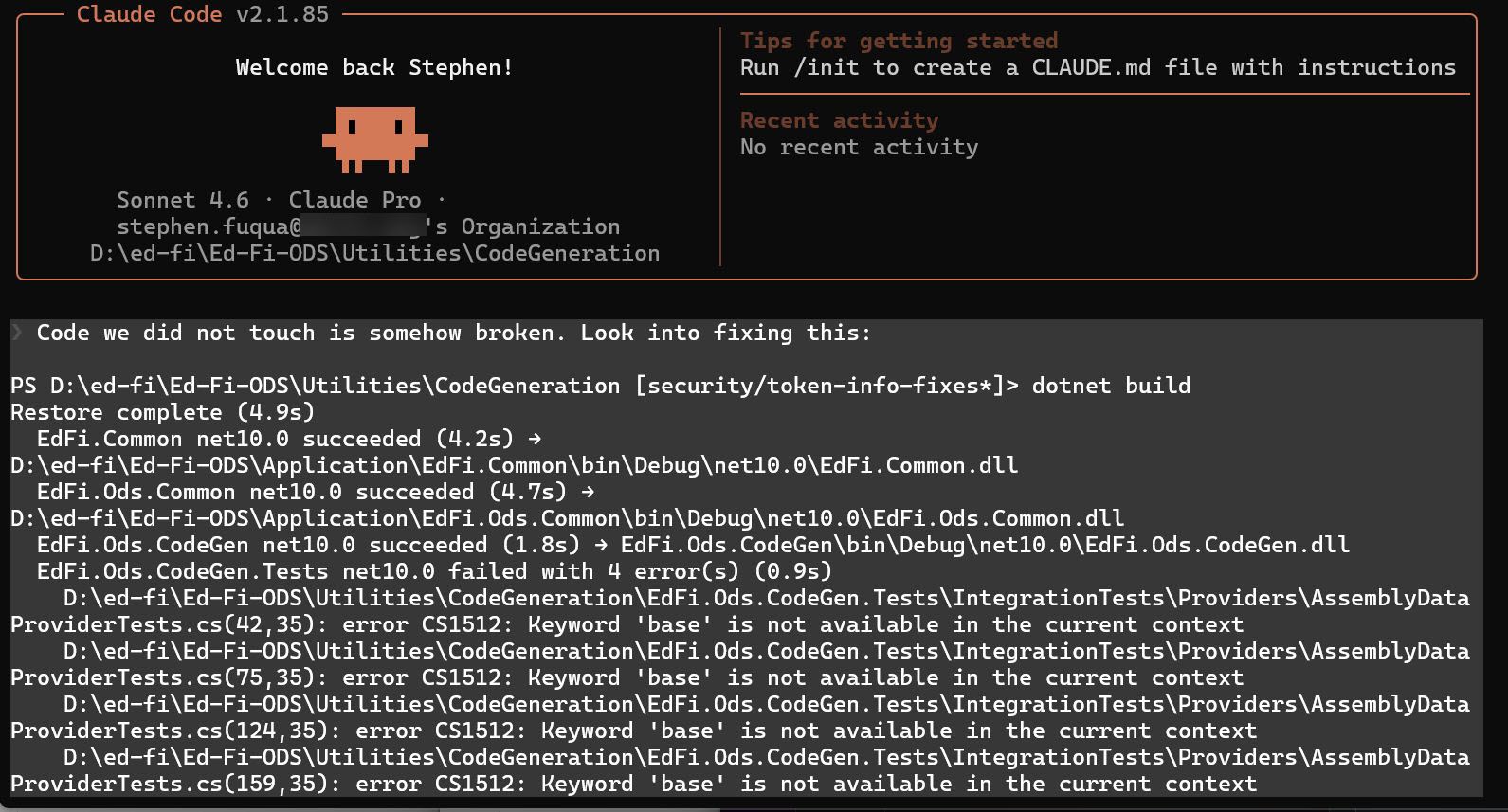

That changed once I sat down and used the CLI to do my actual work. As a knowledge worker, if you haven't used Copilot, Claude, or your favorite tool in the terminal, you are truly missing out.

🔝 Top 3 reasons I like this experience: